Gartner forecasts that by 2023, more than 740,000 autonomous vehicles (AVs) will be on the market. For AVs to be deployed on the roads quickly and effectively, however, they need large training datasets that are accurately annotated and labelled. This is where technical experts have a major role to play: their skills, knowledge and experience can make the transition smoother and more impactful.

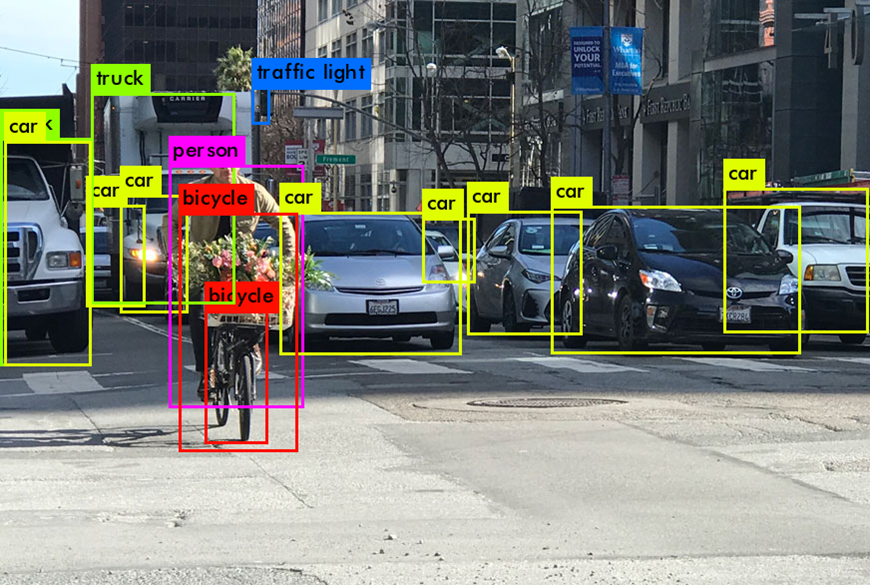

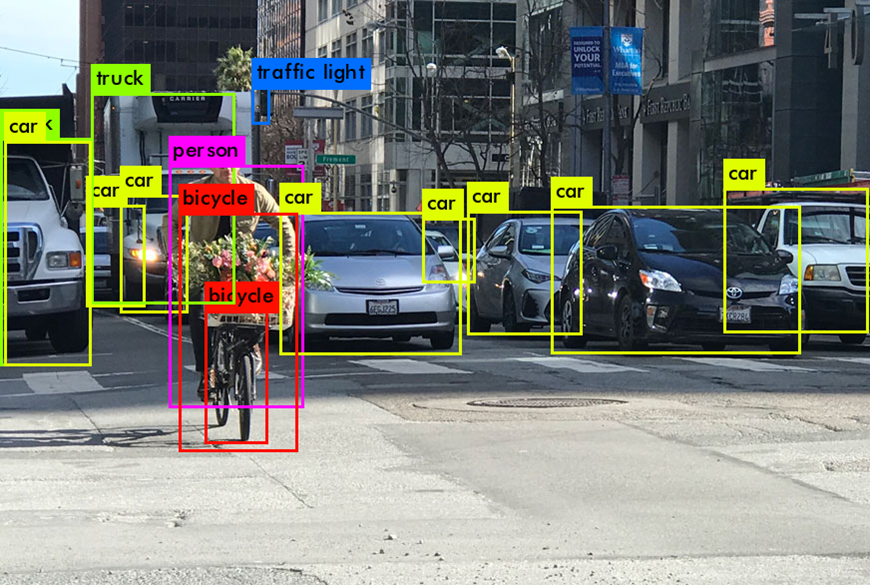

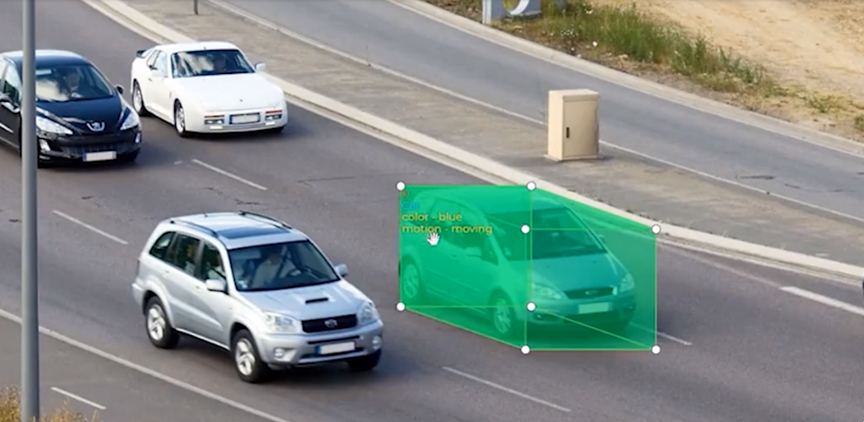

Autonomous vehicles collect data from a variety of sensors, including cameras, LiDAR, radar and ultrasound. This raw data must be annotated and labelled before it can be used to make autonomous vehicles as self-reliant as they are supposed to be. Annotation and labelling tasks range from bounding boxes, semantic segmentation, polylines, polygons and geo-annotation to key point annotation.
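
To make these annotation types concrete, here is a minimal Python sketch of how the labels for a single camera frame might be represented. The class names, field names and label strings are illustrative assumptions, not a standard format or the schema of any particular tool.

```python
from dataclasses import dataclass, field
from typing import List, Tuple

Point = Tuple[float, float]  # (x, y) in image pixels


@dataclass
class BoundingBox:
    """Axis-aligned rectangle around an object."""
    label: str
    x_min: float
    y_min: float
    x_max: float
    y_max: float


@dataclass
class Polygon:
    """Closed outline tracing the shape of an irregular object."""
    label: str
    vertices: List[Point]


@dataclass
class Polyline:
    """Open path, for example a lane marking or a road edge."""
    label: str
    points: List[Point]


@dataclass
class KeyPoint:
    """Single point of interest, for example a pedestrian's joint."""
    label: str
    position: Point


@dataclass
class FrameAnnotations:
    """All annotations attached to one frame (hypothetical container)."""
    frame_id: str
    sensor: str
    boxes: List[BoundingBox] = field(default_factory=list)
    polygons: List[Polygon] = field(default_factory=list)
    polylines: List[Polyline] = field(default_factory=list)
    keypoints: List[KeyPoint] = field(default_factory=list)


# Example: one frame with a car (box) and a lane marking (polyline).
frame = FrameAnnotations(frame_id="frame_000001", sensor="camera_front")
frame.boxes.append(BoundingBox("car", 120, 200, 360, 340))
frame.polylines.append(
    Polyline("lane_marking", [(0, 480), (320, 300), (400, 250)])
)
```

Semantic segmentation, which assigns a class to every pixel, is usually stored as a separate mask image rather than as a list of shapes, so it is left out of this sketch.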

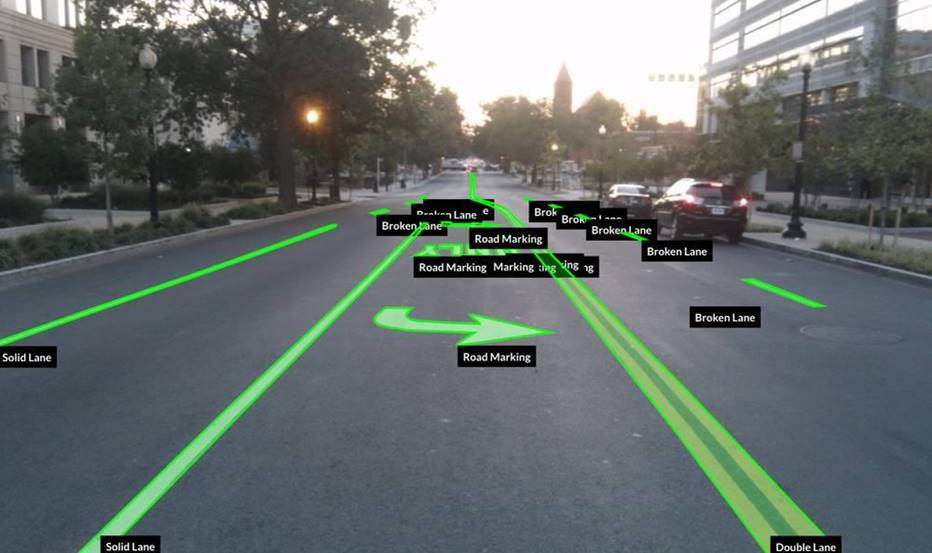

Autonomous vehicles are still working towards full autonomy. There are a lot of issues to be ironed out, safety being the foremost. To detect, track and classify objects effectively, and to make informed decisions for path planning and safe navigation, the algorithms that drive autonomous vehicles must be trained on annotated datasets. The vehicles need robust, high-performance algorithms that can accurately detect other vehicles, lane markings, static and moving objects, pedestrians, traffic lights, signage and so on. To achieve this level of detection accuracy, the algorithms need to be trained on a large volume of high-quality annotated and labelled data.

The process of data curation, or annotation, involves tagging or classifying objects in each frame captured by an autonomous vehicle. The data is curated so that a deep learning model can learn from it: the relevant objects are identified and then tagged or labelled. Tagging can be carried out by human annotators, by AI, or by a combination of both. Once the model has been trained on this contextual data, it can infer patterns from new data and classify objects accurately. The best results in detecting, identifying and classifying objects from raw data come when the intelligence of human annotators is combined with the power of AI. This is what Magnasoft specializes in.
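
One common way to combine AI and human annotators is to let a model propose labels and send only the uncertain ones to a person for review. The Python sketch below illustrates that idea under stated assumptions: the `PreLabel` class, the `route_prelabels` function and the 0.9 threshold are all hypothetical, and this is not a description of Magnasoft's actual workflow.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class PreLabel:
    """A label proposed by a model for one object in a frame."""
    object_id: int
    label: str
    confidence: float  # model score in the range [0, 1]


def route_prelabels(
    prelabels: List[PreLabel], review_threshold: float = 0.9
) -> Tuple[List[PreLabel], List[PreLabel]]:
    """Split model proposals into auto-accepted and human-review queues.

    The threshold is an assumed tuning parameter: proposals scoring at or
    above it are accepted, the rest are queued for a human annotator.
    """
    auto_accepted: List[PreLabel] = []
    needs_review: List[PreLabel] = []
    for prelabel in prelabels:
        if prelabel.confidence >= review_threshold:
            auto_accepted.append(prelabel)
        else:
            needs_review.append(prelabel)
    return auto_accepted, needs_review


prelabels = [
    PreLabel(1, "car", 0.98),
    PreLabel(2, "pedestrian", 0.72),
    PreLabel(3, "traffic_light", 0.91),
]
accepted, review = route_prelabels(prelabels)
print([p.object_id for p in accepted])  # objects accepted automatically
print([p.object_id for p in review])    # objects sent to a human annotator
```

In practice a review step would often also sample some of the auto-accepted labels for spot checks, since a confident model can still be wrong.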

The combination of automated tools and the expertise of human annotators enables Magnasoft to achieve 100% accuracy in data annotation and labelling for autonomous vehicles. We specialize in enriching and customizing annotated data to meet various industry requirements. When AVs need to locate objects and boundaries such as people, cars, flowers, furniture or human faces, our experts are ready to deliver accurate results that smooth the journey of AVs. We can help create computer vision models from 2D images or videos for spatial cognition by AVs. Irregular objects sometimes cannot be captured accurately by bounding boxes; in those cases, drawing polygons lets us trace the exact shape of the object, so that the data becomes a useful training dataset for machine learning applications. To help autonomous vehicles understand boundaries, we can annotate road lanes, sidewalks, highways, airstrips, railroads, coastlines and similar features using polylines.
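
To see why a polygon can describe an irregular object better than a bounding box, the short Python sketch below compares the area of an L-shaped outline with the area of the box that encloses it. The outline coordinates and function names are made up for illustration; the area calculation uses the standard shoelace formula.

```python
from typing import List, Tuple

Point = Tuple[float, float]


def polygon_area(vertices: List[Point]) -> float:
    """Area of a simple polygon via the shoelace formula."""
    total = 0.0
    n = len(vertices)
    for i in range(n):
        x1, y1 = vertices[i]
        x2, y2 = vertices[(i + 1) % n]  # wrap around to close the outline
        total += x1 * y2 - x2 * y1
    return abs(total) / 2.0


def bounding_box(vertices: List[Point]) -> Tuple[float, float, float, float]:
    """Smallest axis-aligned box (x_min, y_min, x_max, y_max) around the points."""
    xs = [x for x, _ in vertices]
    ys = [y for _, y in vertices]
    return min(xs), min(ys), max(xs), max(ys)


def box_area(box: Tuple[float, float, float, float]) -> float:
    x_min, y_min, x_max, y_max = box
    return (x_max - x_min) * (y_max - y_min)


# Hypothetical L-shaped object outline, in arbitrary units.
outline = [(0, 0), (4, 0), (4, 1), (1, 1), (1, 4), (0, 4)]

shape_area = polygon_area(outline)            # 7.0
enclosing_area = box_area(bounding_box(outline))  # 16
print(f"Object fills {shape_area / enclosing_area:.0%} of its bounding box")
```

Here the object covers less than half of its bounding box, so a box label would mark a large amount of background as part of the object, while the polygon marks only the object itself.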

We can recreate the details of the road to an accuracy of 5 cm or better. Our well-defined annotation guidelines help us achieve consistent, effective outcomes in less time. Our in-depth understanding of the field helps us use the most appropriate tool for each annotation task. We look forward to being your ‘go-to’ partner if building high-precision data for autonomous vehicles is on your mind.